AI Exam Security Is Promised. How Do You Know If It’s Actually Working?

- Apr 21

Updated: Apr 24

Every credentialing director running remote exams faces the same challenge: AI proctoring has become the industry standard offering, but evaluating whether any given solution actually holds up is difficult. Vendors use similar language. Demos all look impressive. And the question of what happens when a result gets challenged — legally, procedurally, or by a disgruntled candidate — rarely comes up until it has to.

That gap between marketing claims and operational reality is worth closing before you’re in the room where it matters.

From flags to defensible records

Most remote proctoring systems use AI to flag anomalies: a face disappearing from frame, the presence of a digital device, suspicious audio in the background. These are useful signals. But the meaningful question isn’t whether the AI is generating flags. It’s whether those flags give you a defensible basis for justifiable action.

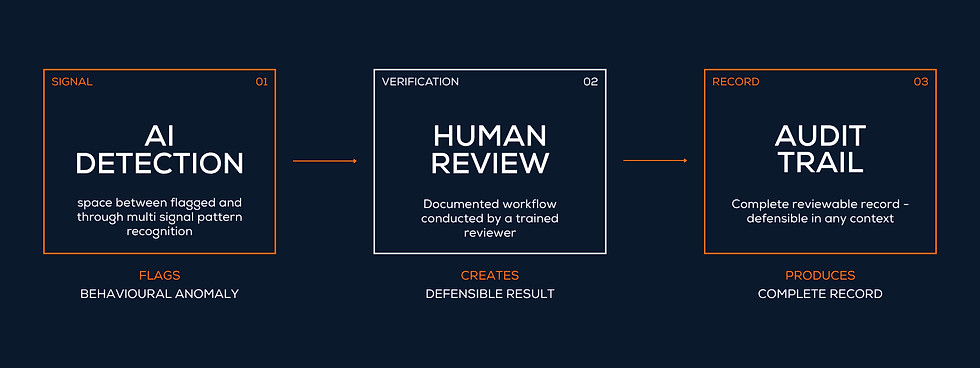

A flag alone is not evidence. What exam programs increasingly need — particularly as credential disputes become more common — is a system that connects automated detection to structured human review, backed by traceable evidence. AI flags and captures. Humans review and decide. Every step is logged, with full-length video preserved for audit. That chain is what makes a result defensible when it’s challenged.
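To make that chain concrete, here is a minimal sketch of what a logged flag-to-review record could look like. It is an illustration only, not any vendor's schema: the names `AuditEntry`, `ExamSessionRecord`, and `every_flag_reviewed`, the `"ai"` actor label, and the evidence reference format are all assumptions.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditEntry:
    """One logged step: who did what, when, and against which evidence."""
    timestamp: datetime
    actor: str         # "ai" or a reviewer ID (hypothetical naming)
    action: str        # "flagged" or "reviewed"
    detail: str
    evidence_ref: str  # pointer into the preserved full-length video


@dataclass
class ExamSessionRecord:
    """Append-only log for one exam session (illustrative, in memory only)."""
    session_id: str
    entries: list[AuditEntry] = field(default_factory=list)

    def log(self, actor: str, action: str, detail: str, evidence_ref: str) -> AuditEntry:
        entry = AuditEntry(datetime.now(timezone.utc), actor, action, detail, evidence_ref)
        self.entries.append(entry)
        return entry

    def every_flag_reviewed(self) -> bool:
        """True only if each AI flag has a matching human review of the same evidence."""
        reviewed = {
            e.evidence_ref
            for e in self.entries
            if e.action == "reviewed" and e.actor != "ai"
        }
        return all(
            e.evidence_ref in reviewed
            for e in self.entries
            if e.action == "flagged"
        )


record = ExamSessionRecord("session-001")
record.log("ai", "flagged", "face left frame", "video@00:12:31")
print(record.every_flag_reviewed())  # False: flagged, but no human has reviewed it yet
record.log("reviewer-17", "reviewed", "candidate picked up a dropped pen; no action", "video@00:12:31")
print(record.every_flag_reviewed())  # True
```

The point of the sketch is the shape of the record: the AI entry and the human entry reference the same piece of evidence, and a flag without a matching review is visible as a gap.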

It’s Not the Signal, It’s the Pattern

One of the more recent shifts in remote proctoring is moving beyond isolated events. For example, a candidate glancing away from the screen for a few seconds may mean very little on its own. An unusual item response pattern, in isolation, may tell you nothing at all. However, when these signals are interpreted together and over time, they can reveal meaningful patterns. A combination of off-screen attention, irregular response timing, and repeated behaviors at specific moments may indicate something worth further review.

The value, therefore, lies not in any single anomaly detector, but in how multiple signals are interpreted in context when a decision needs to be made. This requires more than a list of flags. It requires a structured way to present and connect those signals. Through multi-dimensional data visualization, proctors and credential owners can see what happened, when it happened, and how different indicators relate to each other within the flow of the exam. Instead of reviewing isolated events, they are able to assess a coherent pattern of behavior.

This shifts the role of AI from simply flagging events to supporting informed human judgment — enabling decisions that are not only more reliable, but also defensible when reviewed.
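As a rough illustration of pattern over signal, the sketch below groups signals into fixed time windows and surfaces only the windows where different kinds of signal coincide. The function name, the signal labels, the 60-second window, and the two-type threshold are all hypothetical choices, not a description of how any particular product works.

```python
from collections import defaultdict


def windows_worth_review(signals, window_seconds=60, min_distinct_types=2):
    """Return (window_start_seconds, signal_types) for windows where several
    different signal types co-occur.

    signals: iterable of (seconds_into_exam, signal_type) pairs.
    """
    types_by_window = defaultdict(set)
    for seconds, kind in signals:
        types_by_window[int(seconds // window_seconds)].add(kind)
    return [
        (window * window_seconds, sorted(kinds))
        for window, kinds in sorted(types_by_window.items())
        if len(kinds) >= min_distinct_types
    ]


signals = [
    (125, "gaze_off_screen"),
    (140, "irregular_response_time"),
    (610, "gaze_off_screen"),   # isolated glance: not surfaced
    (1300, "gaze_off_screen"),
    (1310, "gaze_off_screen"),  # same signal repeated: still one type
]
print(windows_worth_review(signals))
# [(120, ['gaze_off_screen', 'irregular_response_time'])]
```

Fixed windows are the simplest possible choice and will miss signals that straddle a boundary. The output is also only a pointer to where a human reviewer should look, not a verdict.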

Three questions to ask any vendor

These three questions move past the demo and get to what matters operationally. Treat vague answers as disqualifying.

ASK BEFORE YOU SIGN

1. What happens after an AI flag? You want a documented workflow: who reviews, what evidence they see, how decisions are made and recorded, and where that record is stored. A flag with no human oversight downstream is not a defensible process.

2. Does the system evaluate patterns, or just isolated events? Ask whether the system can interpret multiple signals in combination, and how those patterns are defined. Understanding the model, even at a high level, matters more than hearing a list of features.

3. Can you show a real audit trail? Request a sample from an actual exam. Time-stamped video, flagged event logs, reviewer decisions, final outcomes: this is the evidence base for any challenge. If it is not clear and complete, assume the documentation won’t hold up when it counts.
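If a vendor does hand over a sample audit trail, a simple completeness check is a reasonable first pass. The sketch below assumes the sample has been exported as a dictionary. The field names are hypothetical stand-ins for the four items named in the third question, not a real export format.

```python
# Hypothetical field names standing in for: time-stamped video, flagged event
# logs, reviewer decisions, and final outcomes.
REQUIRED_FIELDS = ("video", "flagged_events", "reviewer_decisions", "final_outcome")


def missing_audit_fields(sample: dict) -> list[str]:
    """List the required fields that are absent or empty in a sample audit trail."""
    return [name for name in REQUIRED_FIELDS if not sample.get(name)]


sample = {
    "video": "session-001-full.mp4",
    "flagged_events": [{"at": "00:12:31", "type": "face_left_frame"}],
    "reviewer_decisions": [],  # flags exist, but no review was recorded
    "final_outcome": "cleared",
}
print(missing_audit_fields(sample))  # ['reviewer_decisions']
```

A check like this only confirms that each piece is present. Whether the record is clear enough to survive a challenge still takes a human read.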

The standard worth aiming for

The most defensible remote exam programs treat AI proctoring not as a detection tool but as an evidence-generation system — one that produces a complete, reviewable record of what happened, what was flagged, who reviewed it, and what was decided.

Platforms built to this standard, deployed across tens of millions of candidates, have shown that high-volume remote delivery and genuine security are not in tension. Getting there starts with knowing exactly what to ask for.
